Anyone who has had to deal with scientific literature has probably encountered PostScript (".ps") files. Although the format is gradually losing ground to the overwhelming use of PDF, you can still find .ps documents on some major research paper repositories, such as arxiv.org or citeseerx. Most people who produce .ps or .eps documents do so through auxiliary tools, most commonly while typesetting their papers in LaTeX or while preparing images for those papers in a vector graphics editor (e.g. Inkscape). As a result, the majority of its users regard PostScript as just another intermediate file format, akin to any of the myriad other vector graphics formats.

I find this situation unfortunate and unfair towards PostScript. After all, PS is not your ordinary vector graphics format. It is a fully fledged, Turing-complete programming language that is well thought through and elegant in its own way. If it were up to me, I would include a compulsory lecture on PostScript in any modern computer science curriculum. Let me briefly show you why.

Stack-based programming

Firstly, PostScript is perhaps the de facto standard example of a proper, purely stack-based language in the modern world. Other languages of this group are nowadays either dead, too simple, too niche, or impractical. Like any stack language, it looks a bit unusual, yet it is simple to reason about and its complete specification is decently short. Let me show you some examples:

2 2 add % 2+2 (the two numbers are pushed to the stack,

% then the add command pops them and replaces with

% the resulting value 4)

/x 2 def % x := 2 (push symbol "x", push value 2,

% pop them and create a definition)

/y x 2 add def % y := x + 2 (you get the idea)

(Hello!) show % print "Hello!"

x 0 gt {(Yes) show} if % if (x > 0) print "Yes"
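The same stack discipline carries over to user-defined procedures and to the stack-shuffling operators. Here is a minimal sketch; the name `square` is made up for illustration, while `dup`, `mul`, `exch` and `sub` are standard operators:

```postscript
% Hypothetical helper: replace the top of the stack with its square.
/square { dup mul } def   % dup copies the top value, mul multiplies the two copies

3 square                  % stack: 9
4 square                  % stack: 9 16
add                       % stack: 25
2 exch sub                % push 2, swap the top two values, subtract: 2 - 25 = -23
```

A procedure is just a block of code stored under a name, so calling it looks exactly like using a built-in operator.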

Adding a couple of commands that specify the font parameters and the current position on the page, we can write a perfectly valid PS file that performs arithmetic operations, e.g.:

/Courier 10 selectfont % Font we'll be using

72 720 moveto % Move cursor to position (72pt, 720pt)

% (0, 0) is the lower-left corner

(Hello! 2+2=) show

2 2 add % Compute 2+2

( ) cvs % Convert the number to a string.

% (First we had to provide a 1-character

% string as a buffer to store the result)

show % Output "4"
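The one-character buffer is enough here only because the result has a single digit; `cvs` fails with a rangecheck error if the string it is given is too short. A small sketch with a roomier buffer, where the name `buf` and the numbers are arbitrary choices of mine:

```postscript
/Courier 10 selectfont
72 700 moveto
/buf 12 string def        % hypothetical name: a reusable 12-character buffer
1000 37 mul               % 37000 needs five characters
buf cvs                   % cvs returns the part of buf that it actually filled
show                      % prints 37000
showpage
```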

Computer graphics

PostScript has all the basic facilities you'd expect from a programming language: numbers, strings, arrays, dictionaries, loops, conditionals, basic input/output. In addition, being primarily a 2D graphics language, it has all the standard graphics primitives. Here's a triangle, for example:

newpath % Define a triangle

72 720 moveto

172 720 lineto

72 620 lineto

closepath

gsave % Save current path

10 setlinewidth % Set stroke width

stroke % Stroke (destroys current path)

grestore % Restore saved path again

0.5 setgray % Set fill color

fill % Fill
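The non-graphical facilities mentioned earlier, such as loops and arrays, follow the same postfix pattern. A minimal sketch (the strings and coordinates are arbitrary):

```postscript
/Courier 10 selectfont
72 600 moveto

% "start step limit {body} for" pushes the counter before each iteration
1 1 5 {
    3 string cvs show     % convert the counter to a string and print it
    ( ) show
} for

% forall pushes each array element in turn and runs the body
[ (red) (green) (blue) ] { show ( ) show } forall

showpage
```

This should print the numbers 1 to 5 followed by the three color names on a single line.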

PostScript natively supports the standard graphics transformation stack:

/triangle { % Define a triangle of size 1 in the 0,0 frame

newpath

0 0 moveto

1 0 lineto

0 1 lineto

closepath

fill

} def

72 720 translate % Move origin to 72, 720

gsave % Push current graphics transform

-90 rotate % Draw a rotated triangle

72 72 scale % .. with 1in dimensions

triangle

grestore % Restore back to non-scaled, non-rotated frame

gsave

100 0 translate % Second triangle will be next to the first one

32 32 scale % .. smaller than the first one

triangle % .. and not rotated

grestore

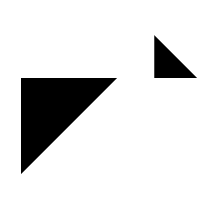

Here is the result of the code above:

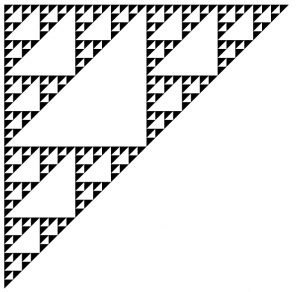

The most common example of using a transformation stack is drawing fractals:

/triangle {

newpath

0 0 moveto

100 0 lineto

0 -100 lineto

closepath

fill

} def

/sierpinski {

dup 0 gt

{

1 sub

gsave 0.5 0.5 scale dup sierpinski grestore

gsave 50 0 translate 0.5 0.5 scale dup sierpinski grestore

gsave 0 -50 translate 0.5 0.5 scale sierpinski grestore

}

{ pop triangle }

ifelse

} def

72 720 translate % Move origin to 72, 720

5 5 scale

5 sierpinski

With some more effort you can implement nonlinear dynamic system (Mandelbrot, Julia) fractals, IFS fractals, or even proper 3D raytracing in pure PostScript. Interestingly, some printers execute PostScript natively, which means all of those computations can happen directly on your printer. Moreover, it means that you can make a document that will make your printer print infinitely many pages. So far I could not find a printer that would work that way, though.
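The never-ending document needs nothing more than `showpage` inside an unbounded `loop`. This is only a sketch of the idea, with a made-up counter `n`; a real printer or spooler may well impose page or time limits, and it is best tried in a screen viewer rather than on paper:

```postscript
/n 0 def                      % hypothetical page counter
{
    /Courier 10 selectfont
    /n n 1 add def
    72 720 moveto
    (Page ) show
    n 10 string cvs show      % print the current page number
    showpage                  % emit the page and start a new one
} loop                        % no exit condition, so the loop never ends
```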

System access

Finally, it is important to note that PostScript has (usually read-only) access to information about your system. This makes it possible to create documents whose content depends on the user who opens them or on the machine where they are opened or printed. For example, the document below will print "Hi, %username!", where %username is your system username (note that getenv is an extension provided by Ghostscript rather than part of the core language):

/Courier 10 selectfont

72 720 moveto

(Hi, ) show

(LOGNAME) getenv {} {(USERNAME) getenv pop} ifelse show

(!) show

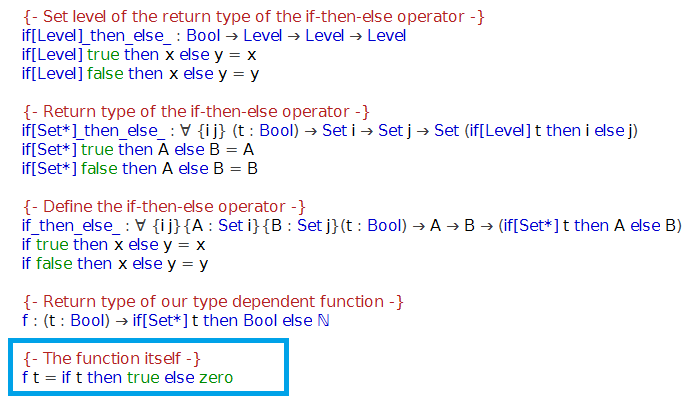
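The user name is not the only machine-dependent value a document can read. As a further sketch, assuming a Level 2 interpreter, the standard `product` and `version` operators return strings describing the interpreter that is rendering the page:

```postscript
/Courier 10 selectfont
72 700 moveto
(Rendered by ) show
product show              % name of the interpreter product, as a string
( version ) show
version show              % interpreter version, as a string
showpage
```

The same file will therefore show different text in a desktop viewer and on a printer with a built-in interpreter.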

For most people, downloading a research paper from arxiv.org that greets them by name would probably seem creepy. Hence this is probably not the kind of functionality one would exploit with good intentions. Indeed, Dominique has an awesome paper that proposes a way in which paper reviewers could be deanonymized by including user-specific typos in the document. Check out the demo with the source code.

I guess this is, among other things, one of the reasons we are going to see fewer and fewer PostScript files around. Nonetheless, the language is worth checking out, even if only once.